Reference Codes

Alya

Alya is a high-performance computational mechanics code for solving complex coupled multi-physics / multi-scale / multi-domain problems, mostly from the engineering realm. Alya is developed by the Alya Dev Team, formed jointly by researchers and developers of BSC and ELEM Biotech. The physics solved by Alya include incompressible/compressible flows, non-linear solid mechanics, chemistry, particle transport, heat transfer, turbulence modeling, and electrical propagation, among others.

Alya was designed from scratch for massively parallel supercomputers, and its parallelization embraces four levels of the computer hierarchy. 1) A substructuring technique with MPI as the message-passing library is used for distributed-memory supercomputers. 2) At the node level, both loop and task parallelism are considered, using OpenMP as an alternative to MPI. Dynamic load balancing techniques have also been introduced to better exploit computational resources at the node level. 3) At the CPU level, some kernels are designed to enable vectorization. 4) Finally, accelerators such as GPUs are exploited through OpenACC pragmas or CUDA to further enhance the performance of the code on heterogeneous computers.

Multiphysics coupling is achieved following a multi-code strategy that relates different instances of Alya. Each instance solves a particular physics, and MPI is used to communicate between the instances. This technique enables asynchronous execution of the different physics. Thanks to a careful programming strategy, coupled problems can be solved while retaining the scalability properties of the individual instances.

The code is one of the two CFD codes of the Unified European Applications Benchmark Suite (UEABS) as well as of the Accelerator Benchmark Suite of PRACE. Alya is presently involved in five centers of excellence: RAISE, EoCoE-2, CompBioMed2, Excellerat and CoEC.

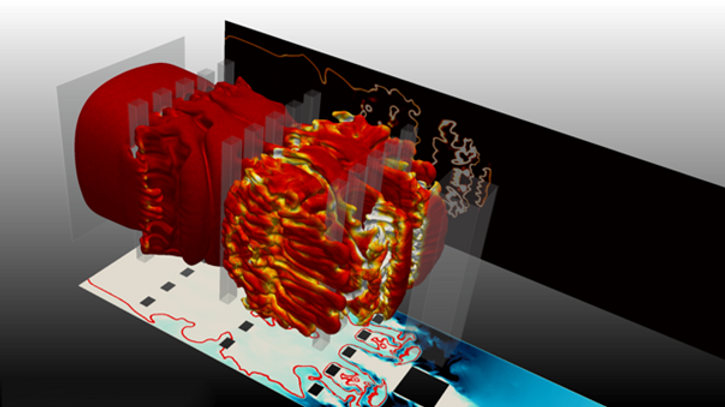

Figure 1: Turbulence on a wind farm and LES simulations of a wind turbine using an actuator disc.

AVBP

AVBP is an LES (Large Eddy Simulation) code dedicated to unsteady compressible flows in complex geometries, with or without combustion. It is applied to combustion chambers, turbomachinery, safety analysis, optimization of combustors, pollutant formation (CO, NO, soot), and uncertainty quantification (UQ) analysis. AVBP uses a high-order Taylor-Galerkin scheme on hybrid meshes for multi-species perfect or real gases. Its spatial accuracy is third order on unstructured hybrid meshes (fourth order on regular meshes). The AVBP formulation is fully compressible and allows the investigation of compressible combustion problems such as thermoacoustic instabilities (where acoustics are important) or detonation engines (where combustion and shocks must be computed simultaneously).
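As a rough illustration of the Taylor-Galerkin idea, and not of the specific high-order variant implemented in AVBP (which is not detailed here), consider the 1D linear advection equation with a constant speed $c$. The model equation and the symbols $u$, $c$ and $\Delta t$ are assumptions chosen for simplicity. The solution is expanded in a Taylor series in time, and the time derivatives are replaced by space derivatives using the equation itself:

```latex
\begin{aligned}
&\partial_t u + c\,\partial_x u = 0
  \quad\Rightarrow\quad
  \partial_t u = -c\,\partial_x u,\qquad
  \partial_{tt} u = c^2\,\partial_{xx} u, \\
&u^{n+1} = u^n + \Delta t\,\partial_t u^n
  + \tfrac{\Delta t^2}{2}\,\partial_{tt} u^n + O(\Delta t^3) \\
&\phantom{u^{n+1}} = u^n - c\,\Delta t\,\partial_x u^n
  + \tfrac{c^2 \Delta t^2}{2}\,\partial_{xx} u^n + O(\Delta t^3).
\end{aligned}
```

The resulting semi-discrete expression is then discretized in space with a Galerkin finite element method. This is the second-order member of the family; higher-order variants retain more terms of the expansion or use several stages.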

Explosion in a building: LES with the high-fidelity solver AVBP on an INCITE machine, using 1 billion cells. A premixed flame propagates from the left to the right side of the picture, accelerating as it meets obstacles and generates turbulence. See many more examples and movies in the field of combustion and turbomachinery here.

AVBP is a world standard for LES of combustion in engines and gas turbines, owned by CERFACS and IFP Energies Nouvelles. It is used by multiple laboratories (IMFT in Toulouse, EM2C at CentraleSupélec, TU Munich, the von Karman Institute, ETH Zurich, etc.) and companies (SAFRAN AIRCRAFT ENGINES, SAFRAN HELICOPTER ENGINES, ARIANEGROUP, HERAKLES, etc.). It is also commonly used as a benchmark code by computing centers to test their machines. The code is managed using dedicated tools and practices: git for source management, Redmine to track user experience, and biannual releases of new versions. Between 100 and 200 users work with AVBP in Europe, and hundreds of different configurations are computed every year.

AVBP is also used today to compute turbomachinery (compressors and turbines) and full engine configurations. Computing the compressor and the chamber, the chamber and the turbine, or all three simultaneously is now possible with AVBP. This is critical for new propulsion concepts (such as Rotating Detonation Engines) and for the study of coupled phenomena such as the noise emitted by a gas turbine.

AVBP has always been at the forefront of HPC research at CERFACS: its efficiency has been verified up to 250 000 cores with grids of 2 to 4 billion cells. This was done through multiple PRACE (Europe) and INCITE (USA) CPU time allocations, and it requires continuous work on the code architecture itself. CERFACS collaborates with Intel through an Intel Parallel Computing Center (IPCC) to continuously increase the performance of the solver. Collaborations with IBM and NVIDIA are also frequent. AVBP is used in 3 European Centers of Excellence: Excellerat, Combustion, and now RAISE.

AVBP is the baseline code for two European Research Council advanced grants led by IMFT and CERFACS: INTECOCIS on thermoacoustics, which ended in 2018, and currently SCIROCCO on hydrogen combustion technologies. It is now the main code used by CERFACS, SAFRAN TECH and SAFRAN HELICOPTER in two ITN Marie Curie projects focusing on instabilities and ignition in annular chambers: ANNULIGHT, coordinated by NTNU, and MAGISTER, coordinated by the University of Twente.

Basilisk

Basilisk (http://basilisk.fr/) is free software for the solution of partial differential equations on adaptive meshes, developed at CNRS, Institut Jean le Rond d’Alembert in Paris. It follows a finite volume methodology coupled to an iterative multigrid solver and introduces an extension of the C programming language for implementing discretization schemes on Cartesian grids. More specifically, it provides a range of pre-defined solvers for compressible and incompressible flows, electrohydrodynamics, viscoelasticity, and reaction-diffusion problems, amongst others, as well as the option to implement solvers for even more complex simulations.

Specifically, for multiphase flows, the code uses a conservative volume-of-fluid method to distinguish between the liquid and the gas phases, retaining the effects of viscosity and density differences, as well as surface tension and gravity. The quadtree grid construction allows for increased grid resolution in the region of the two-fluid interface. The time integration of the Navier-Stokes equations follows a second-order predictor-corrector scheme, and the resulting Poisson equation for the pressure field is solved with a built-in multigrid solver. The code performance and scalability were analyzed at various HPC centers, on systems such as DEEP-EST and JUAWEI at Jülich, and CTE-AMD at BSC.
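The idea of concentrating quadtree resolution around the interface can be sketched in a few lines. The example below is a minimal illustration in Python, not Basilisk code: the function `refine`, the circular interface (centre `cx`, `cy`, radius `radius`) and the refinement criterion are all assumptions made for this sketch, and no grading between neighbouring cells is enforced.

```python
import math

def refine(x, y, size, level, max_level, cx=0.5, cy=0.5, radius=0.3):
    """Return the leaf cells (x, y, size, level) of a quadtree refined near a circle.

    Hypothetical helper: (x, y) is the lower-left corner of a square cell.
    """
    # Distance from the cell centre to the circular interface.
    xc, yc = x + size / 2, y + size / 2
    dist = abs(math.hypot(xc - cx, yc - cy) - radius)
    # Conservative test: the interface can only cross the cell if the centre
    # lies within half a cell diagonal of it.
    may_cross = dist <= size * math.sqrt(2) / 2
    if level >= max_level or not may_cross:
        return [(x, y, size, level)]
    # Split the cell into four children and recurse.
    half = size / 2
    leaves = []
    for dx in (0, half):
        for dy in (0, half):
            leaves += refine(x + dx, y + dy, half, level + 1,
                             max_level, cx, cy, radius)
    return leaves

# Unit square, refined up to level 6 around a circle of radius 0.3.
leaves = refine(0.0, 0.0, 1.0, 0, max_level=6)
finest = [cell for cell in leaves if cell[3] == 6]
print(len(leaves), "leaf cells,", len(finest), "at the finest level")
```

Only cells that may contain the interface are split, so the leaf count stays well below the 4096 cells of a uniform level-6 grid while the interface region is resolved at the finest level.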

Video Caption: Basilisk simulation of a droplet spreading over a chemically heterogeneous surface, using adaptive mesh refinement to accurately capture the contact line dynamics.

Within RAISE Task 3.5, Basilisk is used for the generation of CFD datasets in wetting hydrodynamics scenarios, to be used for the development of AI-assisted surrogate models by augmenting low-accuracy models with a data-driven part. The goal is to learn the non-linear mappings between droplet trajectories and surface features in order to (i) enhance our insights about the morphology of surfaces and how droplets evolve on them, complementing related experimental efforts, and (ii) help accelerate parametric studies aimed towards designing surfaces in applications for controllable droplet transport.

m-AIA

The multi-physics code m-AIA (formerly called ZFS) has been under active development for more than 15 years at the Institute of Aerodynamics (AIA) of RWTH Aachen University. It has been used successfully in various national and EU-funded projects for the simulation of engineering flow and noise prediction problems. Individual solvers for multi-phase and turbulent, combusting flows, heat conduction and acoustic wave propagation can be fully coupled and run efficiently on HPC systems. The various methods include finite-volume solvers for, e.g., the Navier-Stokes equations, lattice Boltzmann methods and discontinuous Galerkin schemes for the solution of acoustic perturbation equations. Boundary-fitted, block-structured meshes as well as hierarchical Cartesian meshes can be used. A fully conservative cut-cell method is used for the boundary formulation of immersed bodies, which are also allowed to move freely in the solution domain.

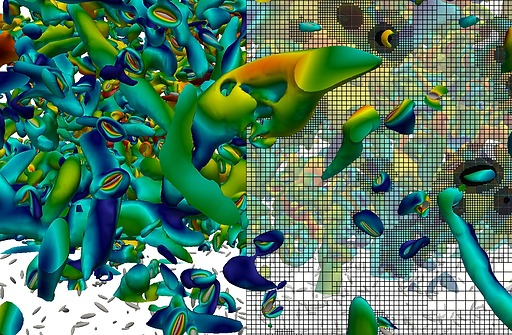

Simulation of particulate flows in the field of pulverized bio-fuel combustion. Interaction of a large number of fully resolved, non-spherical particles with a turbulent flow field. The figure shows the surfaces of the particles with the turbulent flow structures and, on the right, the solution-adaptive Cartesian mesh.

Dynamic load balancing algorithms have been implemented to achieve high parallel efficiency, also for fully coupled solvers utilizing time-varying and solution-adaptive meshes. Heterogeneous HPC platforms can be used thanks to a CPU timer-based domain decomposition. The postprocessing of large-scale simulation results has been performed using Proper Orthogonal Decomposition (POD) and Dynamic Mode Decomposition (DMD) methods to identify modes in complex turbulent flows. Within this project, an application of m-AIA is planned in the field of optimization and optimum control for multi-physics applications based on AI and especially machine learning tools.
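The principle behind a timer-based decomposition can be illustrated with a small sketch. The algorithm used in m-AIA is not described here, so the function `rebalance`, the cell counts and the timings below are hypothetical. The assumption is that each rank measures how long it took to process its cells, and that the next decomposition gives each rank a share proportional to its measured throughput.

```python
def rebalance(cells, seconds):
    """Redistribute cells so each rank's share is proportional to its measured speed.

    Hypothetical helper: cells[i] is the current cell count of rank i and
    seconds[i] the measured compute time for those cells.
    """
    total = sum(cells)
    # Measured throughput of each rank in cells per second.
    speed = [c / t for c, t in zip(cells, seconds)]
    total_speed = sum(speed)
    # Ideal fractional share of each rank, rounded down to whole cells.
    new = [int(total * s / total_speed) for s in speed]
    # Hand the cells lost to rounding to the fastest ranks first.
    leftover = total - sum(new)
    fastest_first = sorted(range(len(new)), key=lambda r: speed[r], reverse=True)
    for rank in fastest_first[:leftover]:
        new[rank] += 1
    return new

# Hypothetical timings: ranks 2 and 3 run twice as fast as ranks 0 and 1.
cells = [1000, 1000, 1000, 1000]
seconds = [2.0, 2.0, 1.0, 1.0]
new_cells = rebalance(cells, seconds)
print(new_cells)
```

With these timings the two faster ranks receive roughly twice as many cells as the slower ones, so all ranks are expected to finish the next step at about the same time. A production implementation would also have to account for communication cost and for the cost of migrating cells, which this sketch ignores.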

Simulation of the turbulent flow around an axial fan rotating at 3000 RPM. Visualization of the turbulent flow with the tip and hub vortices.